Dunno ascii

1/3/2024

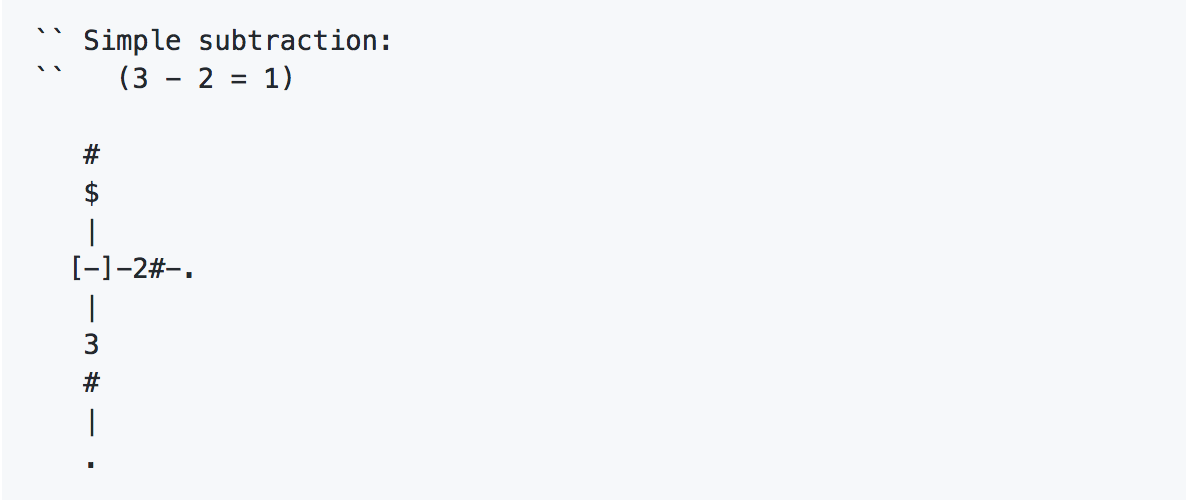

The more parameters your model has, the more complex a relationship it can represent. For example, let's say you have a function f(x). A model with only 2 parameters can describe just a simple relationship between f(x) and x; as the number of parameters grows, the function is able to represent a more complex relationship between f(x) and x.

Tokens are a way to encode text, like ASCII or Unicode. "1.5T tokens" means the training set has 1.5T tokens. "8K length" means the size of the input/output is up to 8K tokens.

So the recipe is: use an existing dataset, or make one with regular GPT-4 prompting and a bit of curation. If you use LoRAs, you can save each skill in a separate diff model just 1% the size of the base model, and fine-tune it on a single GPU in a single day. On your specific task you can get it to be better than stock GPT-4, and cheaper, faster and private.
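To get a feel for the "1% the size of the base model" figure for LoRA, here is a rough back-of-the-envelope sketch. It relies on the standard LoRA setup, where each adapted weight matrix stays frozen and only two small low-rank matrices are trained. Every number in it (model size, hidden size, layer count, rank, which matrices get adapted) is an assumption picked for illustration, not the configuration of XGen, LLaMA or any other real model.

```python
def lora_param_count(d_in: int, d_out: int, rank: int) -> int:
    """Extra trainable parameters LoRA adds to one d_out x d_in weight matrix.

    The original weight stays frozen; LoRA learns a low-rank update B @ A,
    where A has shape (rank, d_in) and B has shape (d_out, rank).
    """
    return rank * d_in + d_out * rank


# All numbers below are illustrative assumptions, not any real model's config.
BASE_PARAMS = 7_000_000_000   # hypothetical 7B-parameter base model
HIDDEN = 4096                 # hypothetical hidden size
LAYERS = 32                   # hypothetical number of transformer layers
ADAPTED_PER_LAYER = 4         # assume 4 square projection matrices adapted per layer
RANK = 64                     # assumed LoRA rank

# Total adapter size: one (A, B) pair per adapted matrix, in every layer.
adapter_params = LAYERS * ADAPTED_PER_LAYER * lora_param_count(HIDDEN, HIDDEN, RANK)
fraction = adapter_params / BASE_PARAMS

print(f"adapter parameters: {adapter_params:,}")
print(f"fraction of base model: {fraction:.2%}")
```

With these assumed numbers the adapter comes out just under 1% of the base model. A smaller rank or fewer adapted matrices shrinks it further, so the exact percentage depends on how you configure the fine-tune.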

Meta provided the training wheels with LLaMA. Every company tried fine-tuning it for its own purposes, but could not proceed for lack of a commercial base model. The problem is that LLaMA is licensed for non-commercial use only. LLaMA models are great if you want to run your own models on your own systems, but only if you fine-tune them to specific tasks. A base model like this is not intended to be used with general-purpose prompting like ChatGPT. There was a need for a small, efficient pre-trained model to build on. Salesforce XGen and a few other open small LLMs (funny how that sounds!) open the floodgates.

The problems with ChatGPT are many: dependence on a third party, privacy, externally imposed ideology and rules, cost, and most importantly, prompting is context-size limited and token-expensive, so you can't pack much data into it. Fine-tuning is a more powerful approach where you can actually fix the model's problems instead of futzing around with the prompt and demonstrations. And if you don't already have a dataset, you can bootstrap one with GPT-4 for a small sum.
